In a fascinating move, Tesla is turning on in-car monitoring for drivers using Autopilot. This is meant to contain the behavioral risk created by Autopilot.

There are a lot of interesting elements to unpack:

A refresher of behavioral risk versus product risk

What Autopilot does and how it creates the opportunity for behavioral risk

Why the beta aspect of Full Self Driving is a key consideration

Potential privacy risk created by this move

The difference between Behavioral Risk and Product Risk

I delineated behavioral risk vs product risk while talking about Peloton's Tread+ situation.

Behavioral risk comes from users of a product using the product incorrectly or maliciously. The risk-creating problem is people's behavior. You mitigate this by clearly and visibly messaging proper behavior and having mechanisms to detect incorrect behavior.

Product risk comes from products being incorrectly designed and failing during reasonable use. You mitigate this with a safety-informed product design process and very rigorous testing processes.

Example of behavioral risk: People falling asleep while driving.

Example of product risk: A SpaceX rocketship blowing up during liftoff.

How Autopilot's convenience creates opportunity for behavioral risk

Autopilot is really interesting because it's a convenience feature that also has impressive safety capabilities. It lowers the cognitive load of driving by taking over many (but not all!) tasks. The vehicle safely navigates on the highway and frequently takes evasive maneuvers even before drivers are aware of an impending risk. Tesla shares in its safety reports that collision rates with Autopilot engaged are half of what they are when it’s not engaged*.

However, Autopilot is so successful in its value proposition that it can inadvertently create behavioral risk when it's misused. Tesla owners have uploaded YouTube videos of the car driving while they sit in the back seat. Many people have been hurt or had near-misses (6:08) by over-trusting the system. In a recent fatal collision, the driver had previously posted videos of himself being driven without his hands on the wheel.

Tesla is turning on the indoor camera to mitigate some of this behavioral risk and get people to pay attention. If people know they are being monitored, their behavior generally improves, especially if the monitoring comes with feedback and consequences. The indoor footage will be available for review, which will be especially helpful for post-accident investigations and liability determination.

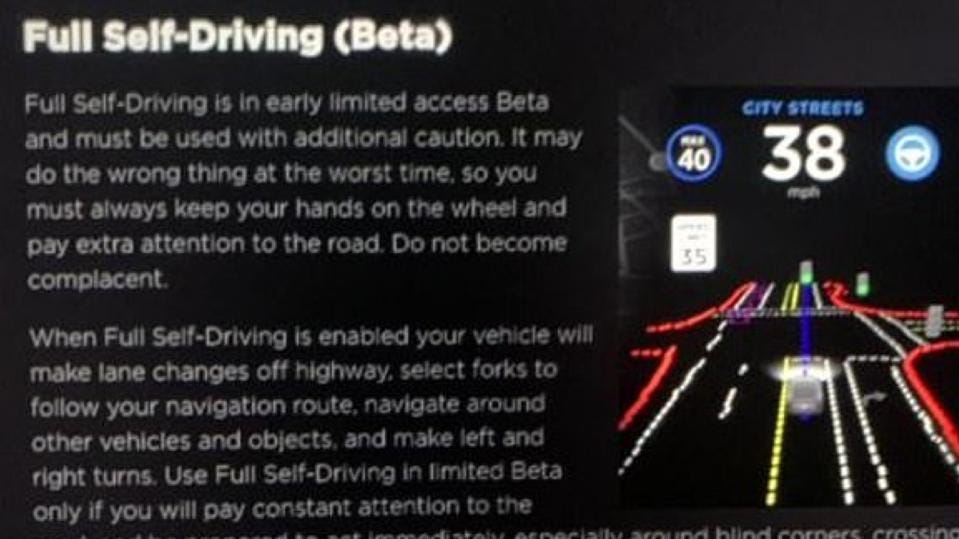

How Full Self Driving factors in

A key motivation for Tesla here is likely the planned rollout of Full Self Driving mode. Right now Full Self Driving is still in very limited availability, but Tesla wants to roll it out in pursuit of the larger goal of autonomy. Autonomy is of very significant value to customers (sign me up!), but also to Tesla. It's high-margin software rather than hardware. If bought, Full Self Driving probably nearly doubles a car's gross margin. Full Self Driving is $10k, whereas a Model 3's overall gross margin is around $13.5k (assuming a $45k price point and 30% gross margin).
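For anyone who wants to check the math, here is a rough back-of-the-envelope sketch. The price point, margin rate, and Full Self Driving price come from the paragraph above; `fsd_margin_rate` is my own assumption that the software revenue is almost entirely margin, and the real figure is likely somewhat lower.

```python
# Back-of-the-envelope check of the margin figures quoted above.
car_price = 45_000         # assumed Model 3 price point (from the text)
gross_margin_rate = 0.30   # assumed gross margin rate (from the text)
fsd_price = 10_000         # Full Self Driving price (from the text)
fsd_margin_rate = 1.0      # ASSUMPTION: software revenue is ~all margin


def gross_margin(price, rate):
    """Gross margin in dollars for a given price and margin rate."""
    return price * rate


car_margin = gross_margin(car_price, gross_margin_rate)
fsd_margin = gross_margin(fsd_price, fsd_margin_rate)

# How much larger the per-car gross margin is when FSD is purchased.
uplift = (car_margin + fsd_margin) / car_margin

print(f"Car gross margin:      ${car_margin:,.0f}")
print(f"Car + FSD gross margin: ${car_margin + fsd_margin:,.0f}")
print(f"Uplift:                 {uplift:.2f}x")
```

Under these assumptions the uplift works out to roughly 1.7x, so "nearly doubles" is the generous end of the estimate. A lower car price or a thinner hardware margin would push it closer to 2x.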

Enter the Autopilot risks. If customers routinely abuse it and it's perceived that Tesla isn't doing enough, which has previously been alleged in a lawsuit, then regulatory scrutiny is around the corner. That is not new for Tesla. However, it's a major problem if that regulatory scrutiny results in Tesla having to slow down or pause the Full Self Driving rollout. Hence, Tesla wants to contain that risk.

The new risk - privacy

Tesla's mitigation of the Autopilot-induced behavioral risks is good for customer safety and for reducing regulatory risk. But for the brand? Not so sure.

This could be considered an invasion of privacy. People don't like cameras that they don't feel they can control. And if someone has paid $40k+ for a Tesla and now feels like they have lost some freedom, it could be a negative.

Tesla can contain, not eliminate, this risk too by leveraging their brand and connected relationship with customers. They need to clearly communicate when the camera turns on, where the footage goes (stays in car), and when the footage is deleted. If they do that, there will be less outrage and people could acclimate.

In closing

Overall, it’s an interesting move, Tesla.

You're changing one feature's use to further the long-term mission.

Preet

p.s. There is a whole YouTube channel called Wham Baam Teslacam. It has 185k subscribers! If every subscriber were a Tesla owner, that would be more than 10% of owners.

*Tesla doesn’t clarify whether Autopilot is used more on highways or not. City driving has more collisions per mile than highway driving, so this could be a cherry-picked stat. My experience leads me to believe there is some bias, but I still expect Autopilot outperforms.
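To make the possible bias concrete, here is a small sketch with entirely made-up numbers (none of them come from Tesla's safety reports). It assumes Autopilot and manual driving are equally safe on each road type and only differ in where the miles are driven.

```python
# All numbers are HYPOTHETICAL, chosen only to illustrate the mix effect.
# Collisions per million miles by road type, identical for both modes.
rate_per_million_miles = {"highway": 0.5, "city": 2.0}

# Share of miles driven on each road type (also hypothetical).
autopilot_mix = {"highway": 0.9, "city": 0.1}
manual_mix = {"highway": 0.4, "city": 0.6}


def blended_rate(mix, rates):
    """Overall collision rate given the share of miles per road type."""
    return sum(share * rates[road] for road, share in mix.items())


autopilot_rate = blended_rate(autopilot_mix, rate_per_million_miles)
manual_rate = blended_rate(manual_mix, rate_per_million_miles)

print(f"Autopilot: {autopilot_rate:.2f} collisions per million miles")
print(f"Manual:    {manual_rate:.2f} collisions per million miles")
```

With these inputs the blended rates come out to 0.65 versus 1.40, so Autopilot looks about twice as safe even though it is no safer on any given road. That is why the highway versus city split matters for reading the headline stat.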

Disclosure: I have been a Tesla shareholder before and may be again.